Cluster-based LLM Router

Switch from a single LLM to a suite of your preferred LLMs, balancing performance and cost efficiency for every prompt.

OUR SOLUTION

How it works

CLUSTER-BASED ROUTING

Tailored to your unique queries

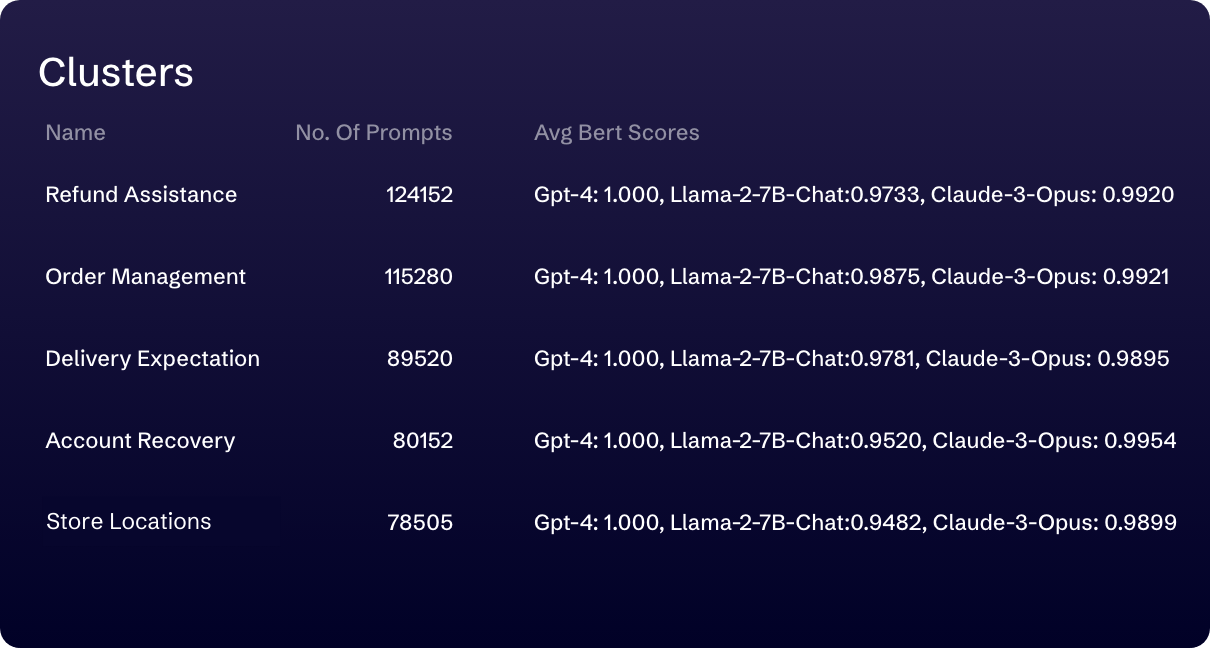

Rather than relying on generic benchmarks, our LLM Router instantly evaluates model performance and cost using your specific data. It segments your data into clusters of semantically similar prompts, each representing a distinct use case. For each cluster, it evaluates candidate LLMs against your preferred model and automatically routes queries to the most suitable one.
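The cluster-then-route idea above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the router's actual implementation. It assumes prompts have already been embedded as vectors, uses plain k-means as the clustering step, and assumes "most suitable" means the cheapest model whose average quality score in a cluster stays within a chosen fraction (a hypothetical `threshold` of 0.95) of the preferred model's score. The functions `kmeans`, `nearest_cluster`, and `choose_models`, along with the model names, costs, and scores, are hypothetical placeholders.

```python
import math
from collections import defaultdict

Vector = list[float]


def nearest_cluster(query: Vector, centroids: list[Vector]) -> int:
    """Index of the centroid closest to the query embedding (Euclidean distance)."""
    return min(range(len(centroids)), key=lambda i: math.dist(query, centroids[i]))


def kmeans(points: list[Vector], k: int, iterations: int = 20) -> list[Vector]:
    """Plain k-means with deterministic farthest-point seeding (an assumed choice)."""
    centroids = [list(points[0])]
    while len(centroids) < k:
        # Seed the next centroid with the point farthest from all current centroids.
        farthest = max(points, key=lambda p: min(math.dist(p, c) for c in centroids))
        centroids.append(list(farthest))
    for _ in range(iterations):
        groups: list[list[Vector]] = [[] for _ in range(k)]
        for p in points:
            groups[nearest_cluster(p, centroids)].append(p)
        for i, group in enumerate(groups):
            if group:  # an empty cluster keeps its previous centroid
                centroids[i] = [sum(dim) / len(group) for dim in zip(*group)]
    return centroids


def choose_models(points, per_prompt_scores, centroids, costs, preferred, threshold=0.95):
    """For each cluster, pick the cheapest model whose mean quality score is at
    least `threshold` times the preferred model's mean score on that cluster."""
    totals = defaultdict(lambda: defaultdict(float))
    counts = defaultdict(int)
    for p, scores in zip(points, per_prompt_scores):
        c = nearest_cluster(p, centroids)
        counts[c] += 1
        for model, s in scores.items():
            totals[c][model] += s
    routes = {}
    for c, model_totals in totals.items():
        means = {m: t / counts[c] for m, t in model_totals.items()}
        baseline = means[preferred]  # assumes quality scores are positive
        eligible = [m for m, s in means.items() if s >= threshold * baseline]
        routes[c] = min(eligible, key=lambda m: costs[m])
    return routes


# Toy 2-D "embeddings" and made-up per-prompt quality scores.
prompts = [[0.0, 0.1], [0.1, 0.0], [5.0, 5.1], [5.1, 5.0]]
scores = [
    {"big-model": 0.90, "small-model": 0.88},
    {"big-model": 0.92, "small-model": 0.90},
    {"big-model": 0.95, "small-model": 0.60},
    {"big-model": 0.93, "small-model": 0.62},
]
costs = {"big-model": 10.0, "small-model": 1.0}

centroids = kmeans(prompts, k=2)
routes = choose_models(prompts, scores, centroids, costs, preferred="big-model")
print(routes[nearest_cluster([0.2, 0.0], centroids)])  # small-model
print(routes[nearest_cluster([4.8, 5.2], centroids)])  # big-model
```

In this toy run, the cheaper model matches the preferred model closely on the first cluster, so those queries are routed to it, while the second cluster keeps the preferred model because the cheaper one falls well below the threshold.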

CUSTOMIZABLE SETTINGS

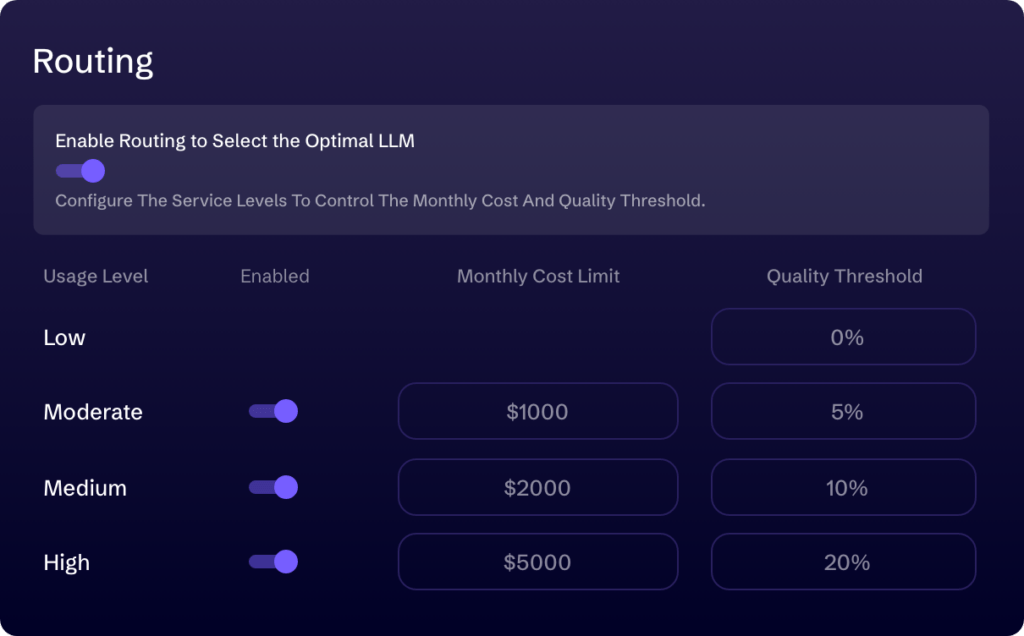

Set your ideal performance-cost balance

Our LLM Router lets you decide how to balance performance against cost. When output quality is paramount, set a high quality-score threshold for routing, or activate the LLM Router only as you approach your budget limit. To understand quality differences across models, you can review responses from different LLMs, along with their quality scores, before you start routing live queries.
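The two settings above, a quality-score threshold and budget-based activation, could be combined into a simple per-query policy. The Python sketch below is a hypothetical illustration, not the product's configuration API. It assumes the mean quality score of each model on the query's cluster is already known, and that the router stays inactive (always using the preferred model) until a hypothetical `activate_at` fraction of the budget has been spent; setting `activate_at=0.0` would route from the first query. `RoutingSettings`, `select_model`, and all numbers are placeholders.

```python
from dataclasses import dataclass


@dataclass
class RoutingSettings:
    quality_threshold: float  # minimum score relative to the preferred model, e.g. 0.95
    budget: float             # spending limit, in the same units as per-call cost
    activate_at: float = 0.8  # start routing once this fraction of the budget is spent


def select_model(mean_scores: dict[str, float], costs: dict[str, float],
                 preferred: str, spent: float, settings: RoutingSettings) -> str:
    """Pick a model for one query, given mean quality scores for its cluster."""
    # Router stays inactive until spending approaches the budget limit.
    if spent < settings.activate_at * settings.budget:
        return preferred
    baseline = mean_scores[preferred]  # assumes quality scores are positive
    eligible = [m for m, s in mean_scores.items()
                if s >= settings.quality_threshold * baseline]
    # The preferred model always qualifies when quality_threshold <= 1.
    return min(eligible, key=lambda m: costs[m])


scores = {"big-model": 0.91, "small-model": 0.89}
costs = {"big-model": 10.0, "small-model": 1.0}
lenient = RoutingSettings(quality_threshold=0.95, budget=100.0)
strict = RoutingSettings(quality_threshold=0.99, budget=100.0)

print(select_model(scores, costs, "big-model", spent=50.0, settings=lenient))  # big-model
print(select_model(scores, costs, "big-model", spent=85.0, settings=lenient))  # small-model
print(select_model(scores, costs, "big-model", spent=85.0, settings=strict))   # big-model
```

Raising the threshold from 0.95 to 0.99 keeps queries on the preferred model even after routing activates, which mirrors the "quality is paramount" setting described above.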

ROUTING HISTORY

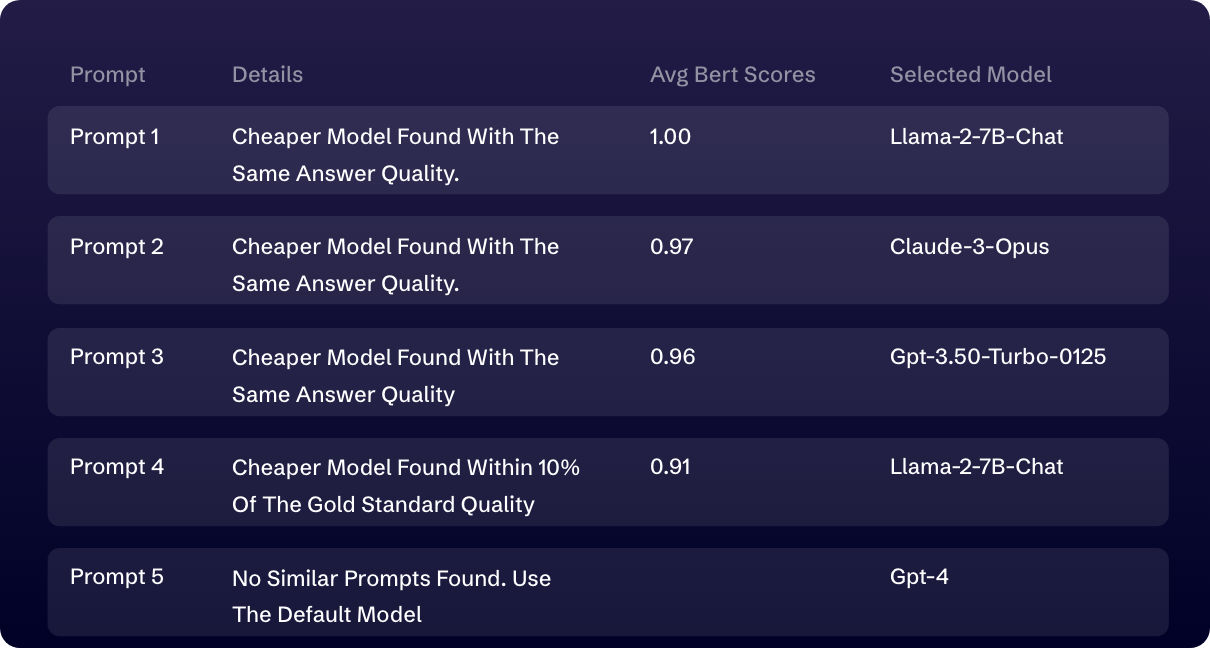

Gain 100% transparency in LLM routing

An intuitive interface displays every prompt, the model selected for it, and that model's quality score relative to your preferred model, giving you a transparent view of how our LLM Router performs. This builds confidence in LLM routing and helps you make informed decisions about your routing settings.